Introduction

This has been a week of disruptions, from Rishi finally throwing in the towel and calling a snap election (cue me panic-applying for a proxy vote) to me succumbing to such an extreme bout of homesickness that it took all my willpower not to sprint to JFK and fling myself onto the next available plane to England.

Anyway, on with the notes.

Things I worked on

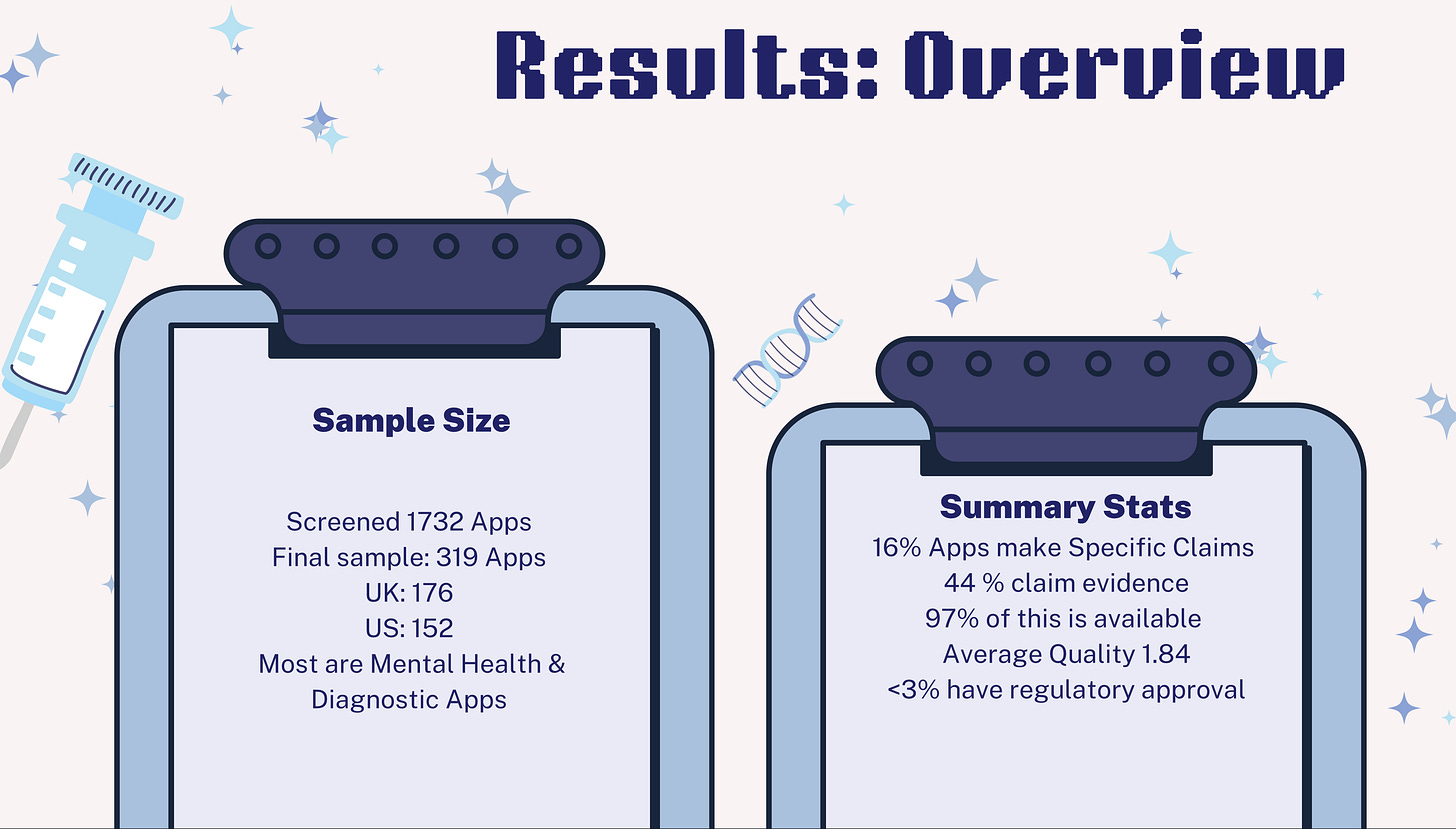

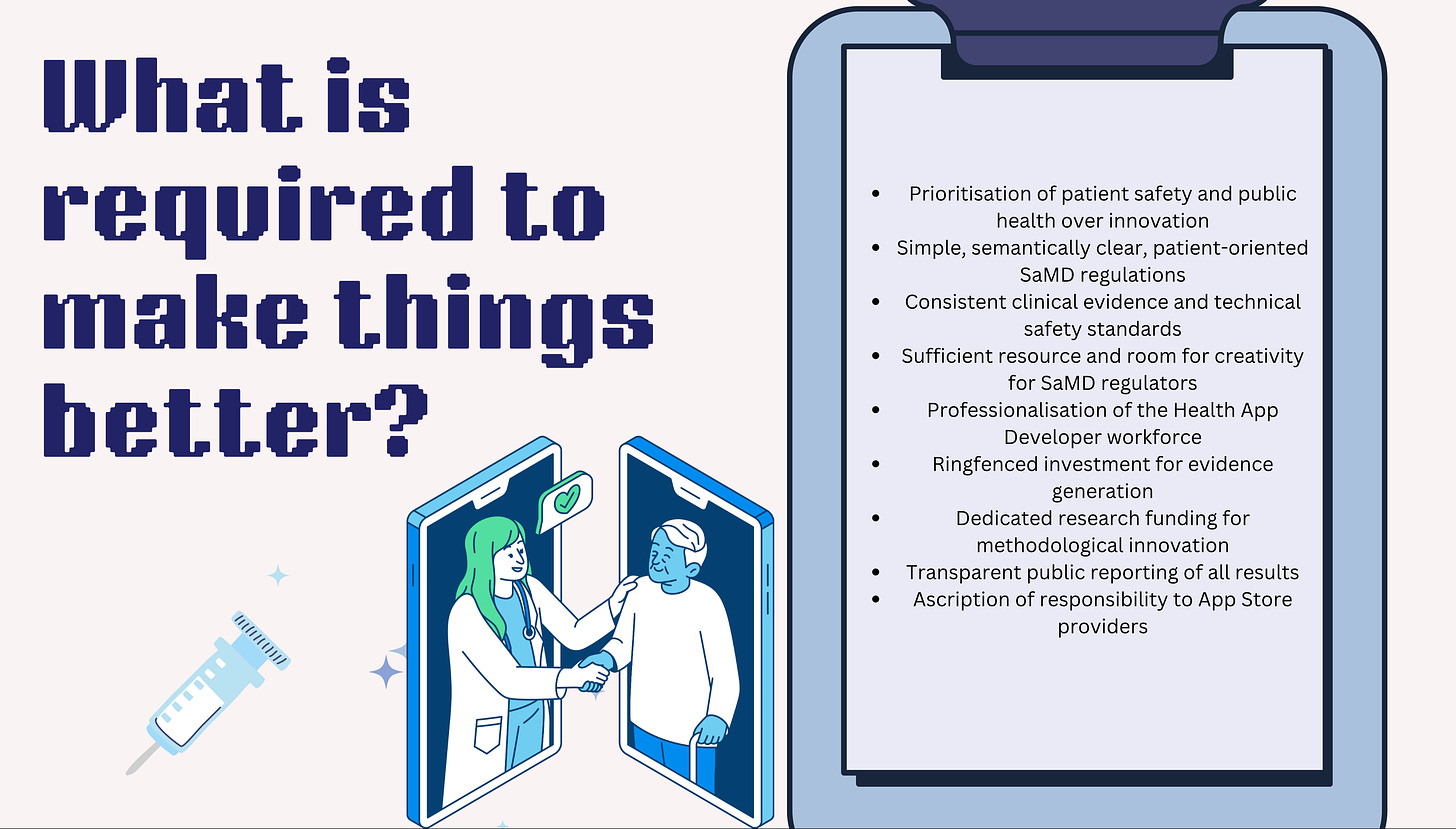

Apps Paper. You may have seen that I have been working on a paper auditing the quality of evidence available to support the efficacy claims of apps on the Apple App Store. Well, this week I finished the full final draft. It’s now with my co-authors for review, refinement, and improvement, and then hopefully we’ll be able to preprint it soon. For now, see “Things I did” below for the slides I used to present this paper earlier in the week.

Brain implants papers x 2. The DEC has a long-running project, in collaboration with the Yale Computer Science Department, looking at the ethical implications of brain implants. There are now two full papers in draft form: one is an overview paper, and the other focuses more on technical design. Both are currently with me for editing and review, so this was a major focus of the week.

HASTE policy workshop. In collaboration with MIT, I am organizing a policy workshop on AI in healthcare entitled “Health AI Science, Technology, and Ethics”, aimed at students from high school to PhD level. The intention is to give students insight into the policy-making process, focusing on how big-ticket items like the EU AI Act and the US Executive Order apply to AI in healthcare, and what the outstanding regulatory and ethical issues are. The workshop will take place over three days in August. The first day will be panel discussions and talks. The second day will be entirely participatory: students will be divided into groups, with one mentor per group, and given various policy challenges to tackle and respond to. The third day (well, morning really) will be a feedback session and a discussion of next steps. The hope is, at the very least, to publish a white paper from the workshop and to see whether fresh ideas emerge from the students. So this week I’ve been doing a lot of prep: finding sponsors, booking venues, creating a sign-up webform, etc. Thankfully, I have help from our wonderful DEC program management team.

Thesis paper 1. As my PhD thesis is a monograph (i.e., it’s like a book that builds up sequentially), almost none of it has been published outside the thesis itself, which is here. I am in the process of turning each of the individual chapters into standalone publishable papers. This week I was putting the finishing touches to the first paper, “AI and the NHS: it’s (more than) complicated”, which corresponds to chapter 4 (the first empirical chapter) of my thesis. It essentially takes the NASSS framework developed by Trish Greenhalgh and colleagues and applies it to algorithmic clinical decision support software (ACDSS), highlighting why it is so much harder to get ACDSS to work in the NHS than rudimentary forms of clinical decision support software (CDSS). I’m also serialising the whole thing (including the theory chapters) as individual posts for this newsletter, as I know reading jargon-filled academic papers is sometimes a chore. The first post, which outlines the difference between command-and-control and design-based approaches to technology implementation, will be out this week.

Things I did

Postdoc Symposium. I gave a ten-minute talk at the Yale Postdoc Symposium, presenting our Apps paper “An app a day will (probably not) keep the doctor away”. My slides are here, or there is a preview below. I am also planning to record the full version of this talk and post it later this week. This “evidence crisis”, as one audience member termed it, cannot be allowed to continue when it is exposing so many people to the risk of harm. I also won a prize for being in the top five short talks, so I am now the proud owner of some excellent Yale Postdoc “swag.”

Preprinted our paper “A Robust Governance for the AI Act: AI Office, AI Board, Scientific Panel, and National Authorities”. You can access the full working paper here, or the abstract is as follows:

“Regulation is nothing without enforcement. This particularly holds for the dynamic field of emerging technologies. Hence, this article has two ambitions. First, it explains how the EU’s new Artificial Intelligence Act (AIA) will be implemented and enforced by various institutional bodies, thus clarifying the governance framework of the AIA. Second, it proposes a normative model of governance, providing recommendations to ensure uniform and coordinated execution of the AIA and the fulfilment of the legislation. Taken together, the article explores how the AIA may be implemented by national and EU institutional bodies, encompassing longstanding bodies, such as the European Commission, and those newly established under the AIA, such as the AI Office. It investigates their roles across supranational and national levels, emphasizing how EU regulations influence institutional structures and operations. These regulations may not only directly dictate the structural design of institutions but also indirectly request administrative capacities needed to enforce the AIA.”

Preprinted our paper “The Effects of AI on Street-Level Bureaucracy: A Scoping Review”. You can access the full working paper here, or the abstract is as follows:

“The use of artificial intelligence (AI) by “street-level bureaucrats” - a term coined by Michael Lipsky to explain the public servants who distribute public benefits and sanctions using professional discretion in interactions with the public - has been expanding. By adopting the structure set forth in Lipsky’s 2010 book, Street Level Bureaucracy, we analyze the effects of AI on the characteristic facets of their work: discretion, working conditions, and patterns of practice. According to more than 70 works gathered from across the fields of justice, policing, education, and social work, we cover how each of these has been or will likely be affected by AI and draw out the ethical implications associated with these changes. We find that AI has mixed effects on frontline discretion - at times supporting and at times limiting it. The literature suggests changes in working conditions, specifically in the form of a shift in resource constraints (from lack of time to inadequate training), technologization of performance measures (which yield few discernible advantages over traditional assessment methods and lead to privacy concerns), and client interactions influenced by their perceptions of AI (resulting from personal and contextual factors). While there is limited literature on how most patterns of practice are affected by AI use, we find that rationing at the level of clients has, across many domains, been made more efficient by the use of these tools, while exacerbating bias, due to biased training data and human-computer interaction. From the literature, we explain the potential effects of AI on three other patterns of practice - rationing at the level of services, securing client compliance, and reconceiving work and clients.”

Published our paper “Regulation by Design: Features, Practices, Limitations, and Governance Implications”. The full paper is published in Minds and Machines here, or the abstract is as follows:

“Regulation by design (RBD) is a growing research field that explores, develops, and criticises the regulative function of design. In this article, we provide a qualitative thematic synthesis of the existing literature. The aim is to explore and analyse RBD’s core features, practices, limitations, and related governance implications. To fulfil this aim, we examine the extant literature on RBD in the context of digital technologies. We start by identifying and structuring the core features of RBD, namely the goals, regulators, regulatees, methods, and technologies. Building on that structure, we distinguish among three types of RBD practices: compliance by design, value creation by design, and optimisation by design. We then explore the challenges and limitations of RBD practices, which stem from risks associated with compliance by design, contextual limitations, or methodological uncertainty. Finally, we examine the governance implications of RBD and outline possible future directions of the research field and its practices.”

Peer-reviewed 5 articles. Peer review is such a tricky thing to balance: I get between four and six review requests every week. It is simply not possible to accept them all, and yet I know how annoying it is when you’re on the other side waiting for reviews. I try to compromise by accepting roughly one a week (so about four a month), depending on how much time is available, but it’s not always easy and the guilt is real. Anyway, this week I reviewed five papers: for Minds and Machines, AI & Society (x 2), BMC Medical Ethics, and The Lancet Digital Health.

Things I thought about

Mental competency in the digital age. Given all the discussion about extended reality, algorithmic manipulation online, vulnerability to mis/disinformation, and more, I am increasingly concerned that our legal and ethical systems are not well equipped to understand how ‘mental competency’ (very broadly defined) is affected by digital technologies, how this varies between people and across circumstances, and what the implications might be. If we have, for example, legal and ethical frameworks for situations in which a person acts out of character due to inebriation or a mental health problem, is it time we developed similar frameworks for when a person acts out of character due to ‘digital manipulation’?

The need for specialist digital agencies. I am getting slightly tired of constantly having to write or read papers that try to shoehorn new responsibilities related to digital technologies (most notably AI) into the remit of established government agencies (e.g., the MHRA, the FDA, etc.). I am no longer convinced that this is the best solution. These agencies are already short on resources and time and, in many instances, do not have the skills necessary to properly address the complexities of digital tech. In the past, there has been resistance to suggestions of creating new government agencies that focus exclusively on regulating AI, or digital tech, in health or other industries. I think this is partly because the regulatory landscape in Europe, in both the UK and the EU, is already so fragmented. But I think it’s clear to everyone now that “digital” is here to stay, and maybe we should accept that agencies established to regulate drugs, for example, are not well suited to regulating algorithms. Creating a neat divide between “analogue regulators” and “digital regulators” might actually be simpler than the current approach, even if it does result in further fragmentation.

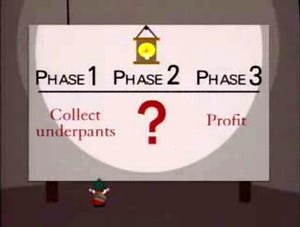

Ludditism vs. critical scepticism. Recently, I’ve seen people call me (and others who do similar types of scholarship) a “Luddite.” I don’t normally pay attention to such comments on the internet because, quite honestly, who has the time, but in this instance it did make me realise that perhaps people don’t understand that it is possible to take a nuanced position on digital technologies (particularly healthcare technologies) that is neither “AI/apps/digital has cured death” nor “AI/apps/digital is bringing about the apocalypse.” A Luddite, by definition, is someone who is opposed to new technology or ways of working. I am very much not opposed to new technology, and neither are my fellow Science and Technology Studies scholars. I am actually very enthusiastic about technology and its potential to improve the world in which we live and, in particular, to make healthcare better. What I am opposed to is technological determinism, or policy attitudes rooted in the assumption that simply implementing technology will automatically make things better. Think, for example, of phrases in policy documents such as “we will improve healthcare outcomes through the use of AI” that say nothing about how this will be achieved, what exactly is meant by “improved outcomes” (for whom, and according to what definition), or even what foundational requirements are needed to make it possible. To me, such statements are infused with the same logic as that of the South Park underpants gnomes (pictured), whose business plan runs: Phase 1, collect underpants; Phase 2, ?; Phase 3, profit. I also think this attitude is dangerous, because if we do not think in advance about how to ensure the implementation of new technology goes right, there’s a pretty good chance it will go wrong (think care.data, or the National Programme for IT in the NHS), resulting in chilling effects (i.e., people abandoning the technology because it has either disappointed or caused harm) and subsequent opportunity costs.

So, most of the time, it is this unquestioning or oversimplifying attitude that I am criticising, never really the idea of the technology itself. It is also why I call myself a sceptical optimist with regard to technology implementation and adoption: I think the benefits are enormous and can be realised, but only if we proactively think about how to capitalise on the opportunities whilst mitigating the risks. I think we currently (as a society, and definitely as policymakers) do not think deeply enough about this, which makes me sceptical that we will achieve the benefits.

(A selection of things) I read

Abbasian, Mahyar, Elahe Khatibi, Iman Azimi, David Oniani, Zahra Shakeri Hossein Abad, Alexander Thieme, Ram Sriram, et al. “Foundation Metrics for Evaluating Effectiveness of Healthcare Conversations Powered by Generative AI.” Npj Digital Medicine 7, no. 1 (March 29, 2024): 82. https://doi.org/10.1038/s41746-024-01074-z.

Alhosani, Khalifa, and Saadat M. Alhashmi. “Opportunities, Challenges, and Benefits of AI Innovation in Government Services: A Review.” Discover Artificial Intelligence 4, no. 1 (March 4, 2024): 18. https://doi.org/10.1007/s44163-024-00111-w.

Boag, William, Alifia Hasan, Jee Young Kim, Mike Revoir, Marshall Nichols, William Ratliff, Michael Gao, et al. “The Algorithm Journey Map: A Tangible Approach to Implementing AI Solutions in Healthcare.” Npj Digital Medicine 7, no. 1 (April 9, 2024): 87. https://doi.org/10.1038/s41746-024-01061-4.

Boylan, Sally, Catherine Arsenault, Marcos Barreto, Fernando A Bozza, Adalton Fonseca, Eoghan Forde, Lauren Hookham, et al. “Data Challenges for International Health Emergencies: Lessons Learned from Ten International COVID-19 Driver Projects.” The Lancet Digital Health 6, no. 5 (May 2024): e354–66. https://doi.org/10.1016/S2589-7500(24)00028-1.

Hatef, Elham, Hsien-Yen Chang, Thomas M Richards, Christopher Kitchen, Janya Budaraju, Iman Foroughmand, Elyse C Lasser, and Jonathan P Weiner. “Development of a Social Risk Score in the Electronic Health Record to Identify Social Needs Among Underserved Populations: Retrospective Study.” JMIR Formative Research 8 (March 12, 2024): e54732. https://doi.org/10.2196/54732.

Hennrich, Jasmin, Eva Ritz, Peter Hofmann, and Nils Urbach. “Capturing Artificial Intelligence Applications’ Value Proposition in Healthcare – a Qualitative Research Study.” BMC Health Services Research 24, no. 1 (April 3, 2024): 420. https://doi.org/10.1186/s12913-024-10894-4.

Liu, Siru, Allison B McCoy, Josh F Peterson, Thomas A Lasko, Dean F Sittig, Scott D Nelson, Jennifer Andrews, et al. “Leveraging Explainable Artificial Intelligence to Optimize Clinical Decision Support.” Journal of the American Medical Informatics Association 31, no. 4 (April 3, 2024): 968–74. https://doi.org/10.1093/jamia/ocae019.

Mehandru, Nikita, Brenda Y. Miao, Eduardo Rodriguez Almaraz, Madhumita Sushil, Atul J. Butte, and Ahmed Alaa. “Evaluating Large Language Models as Agents in the Clinic.” Npj Digital Medicine 7, no. 1 (April 3, 2024): 84. https://doi.org/10.1038/s41746-024-01083-y.

Shaw, James, Joseph Ali, Caesar A. Atuire, Phaik Yeong Cheah, Armando Guio Español, Judy Wawira Gichoya, Adrienne Hunt, et al. “Research Ethics and Artificial Intelligence for Global Health: Perspectives from the Global Forum on Bioethics in Research.” BMC Medical Ethics 25, no. 1 (April 18, 2024): 46. https://doi.org/10.1186/s12910-024-01044-w.

Singhal, Aditya, Nikita Neveditsin, Hasnaat Tanveer, and Vijay Mago. “Toward Fairness, Accountability, Transparency, and Ethics in AI for Social Media and Health Care: Scoping Review.” JMIR Medical Informatics 12 (April 3, 2024): e50048. https://doi.org/10.2196/50048.

Vezyridis, Paraskevas. “‘Kindling the Fire’ of NHS Patient Data Exploitations: The Care.Data Controversy in News Media Discourses.” Social Science & Medicine 348 (May 2024): 116824. https://doi.org/10.1016/j.socscimed.2024.116824.

Do you remember the NHS Apps Library? https://digital.nhs.uk/services/nhs-apps-library